The source of truth, for your enterprise.

We partner with industrial enterprises to extract a customer-owned source of truth over their data, code, and processes, then ship the chat, agents, journeys, and migrations on top.

Trust earned the long way

Built by the founders of Vizuara AI Labs ($2M ARR, 20,000+ students, 400K+ followers). CEOs, CTOs, and engineering managers come to us through our courses; we partner with their companies on what comes next.

Founders' enterprise engagements

Legacy enterprises can't deploy AI on a substrate that doesn't exist

Before chat, before agents, before migrations, there has to be a source of truth. Most companies don't have one.

The data substrate is broken

Decades of undocumented schemas, ERP customisations, business rules that live in people's heads, and hundreds of CSVs nobody has fully mapped. The ground truth exists, but nowhere you can query it.

AI on top of it hallucinates

Off-the-shelf RAG and chat agents give plausible-sounding wrong answers on enterprise data. With no ontology, no eval, and no source of truth, every demo looks great until production.

System integrators take 18 months

And what they ship is bespoke, opaque, and theirs. Your team can't extend it; your data isn't portable; the moment they leave, the system rots.

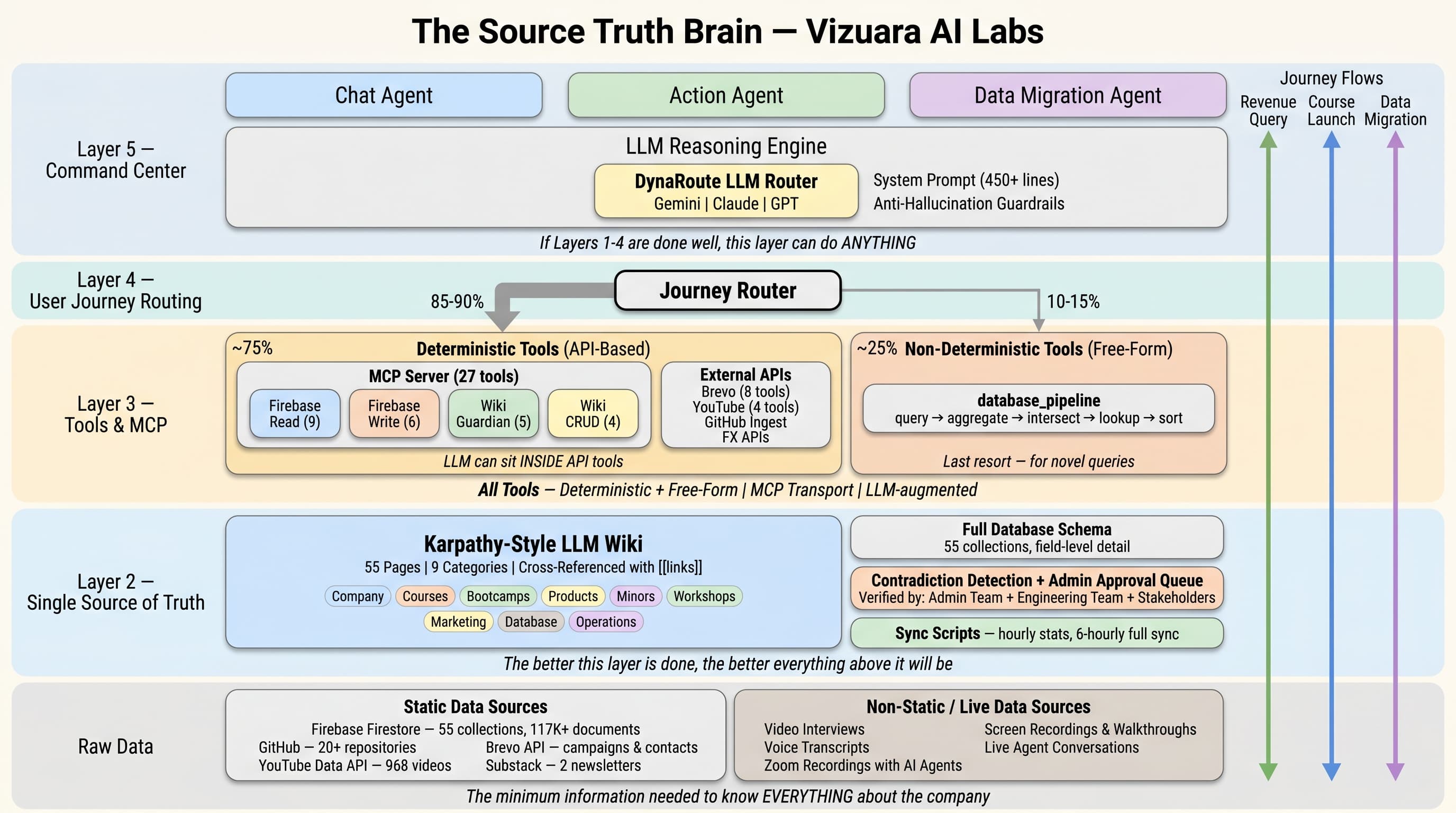

Six layers, one source of truth

A repeatable methodology that turns a tangle of legacy data into a queryable, evaluatable source of truth, owned end-to-end by you.

Data

We audit every schema, table, CSV, and code path your enterprise runs on. Output: a queryable source-truth substrate (SQLite or Postgres) with full provenance.
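To make "full provenance" concrete, here is a minimal sketch of one way to load a CSV into SQLite while tagging every row with its origin file and line. The table name, the `_source_file` / `_source_line` columns, and the sample data are illustrative assumptions, not the schema used in any engagement.

```python
import csv
import io
import sqlite3


def load_csv_with_provenance(conn, table, source_file, csv_text):
    """Load CSV rows into `table`, tagging each row with its origin file and line."""
    reader = csv.DictReader(io.StringIO(csv_text))
    columns = reader.fieldnames or []
    col_defs = ", ".join(f'"{c}" TEXT' for c in columns)
    # Hypothetical provenance columns: where each row came from.
    conn.execute(
        f'CREATE TABLE IF NOT EXISTS "{table}" '
        f"({col_defs}, _source_file TEXT NOT NULL, _source_line INTEGER NOT NULL)"
    )
    placeholders = ", ".join("?" for _ in range(len(columns) + 2))
    for row in reader:
        # reader.line_num is the 1-based line just read (the header is line 1).
        values = [row[c] for c in columns] + [source_file, reader.line_num]
        conn.execute(f'INSERT INTO "{table}" VALUES ({placeholders})', values)
    conn.commit()


conn = sqlite3.connect(":memory:")
sample = "work_order_id,aircraft\nWO-1,VT-ABC\nWO-2,VT-XYZ\n"
load_csv_with_provenance(conn, "work_orders", "work_orders.csv", sample)

# Trace a row back to the file and line it came from.
origin = conn.execute(
    "SELECT _source_file, _source_line FROM work_orders WHERE work_order_id = ?",
    ("WO-2",),
).fetchone()
print(origin)
```

With provenance stored next to the data, any answer built on a row can cite the file and line that produced it.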

Knowledge Graph

Entities, relationships, and rules, extracted from data and from human SMEs in the loop. Ontology-first, materialised as a graph artifact.

Wiki

A Karpathy-style cross-referenced markdown wiki covering domain, ontology, schema, rules, and references, with every page traceable to source rows. Customer-readable.

SDK

A typed Python + TypeScript SDK over the source truth (search, traverse, query), plus an MCP server so agents can call your enterprise as a tool.
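For readers unfamiliar with how an agent calls a tool over MCP, the sketch below answers `tools/list` and `tools/call` requests in JSON-RPC 2.0 framing. It is deliberately simplified: it skips the initialisation handshake and tool input schemas, and the `search` tool and its return value are hypothetical stand-ins for real SDK calls.

```python
import json

# Hypothetical tool registry; a real server would route to SDK search/traverse/query.
TOOLS = {
    "search": lambda args: f"results for {args['query']!r}",
}


def handle_request(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request (a JSON string) with one response string."""
    request = json.loads(raw)
    req_id = request.get("id")
    method = request.get("method")

    if method == "tools/list":
        result = {"tools": [{"name": name} for name in TOOLS]}
    elif method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            # -32602 is the JSON-RPC "Invalid params" code.
            error = {"code": -32602, "message": "Unknown tool"}
            return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})
        text = tool(params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is the JSON-RPC "Method not found" code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


response = json.loads(handle_request(json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "search", "arguments": {"query": "hydraulic pump"}},
})))
print(response["result"]["content"][0]["text"])
```

Over a stdio transport, a loop would read one request per line from standard input and write each response to standard output.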

Journeys

Deterministic, multi-step workflows built on the SDK. Discrepancy resolution, work-order routing, migrations: declarative, debuggable, testable.
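As an illustration of what "declarative" can mean here, the sketch below runs a journey described as plain data: each step names an action and the earlier value it reads. The spec shape, action names, and the in-memory `WORK_ORDERS` lookup are assumptions made for this example, with a Python dict standing in for a parsed YAML file.

```python
from typing import Any, Callable

# Hypothetical journey spec; in practice this could be the parsed YAML twin.
JOURNEY = {
    "name": "discrepancy_resolution",
    "steps": [
        {"id": "lookup", "action": "find_work_order", "input": "work_order_id"},
        {"id": "route", "action": "route_by_status", "input": "lookup"},
    ],
}

WORK_ORDERS = {"WO-1": {"status": "open"}}  # stand-in for an SDK query

ACTIONS: dict[str, Callable[[Any], Any]] = {
    "find_work_order": lambda wo_id: WORK_ORDERS[wo_id],
    "route_by_status": lambda wo: "shop_queue" if wo["status"] == "open" else "archive",
}


def run_journey(journey: dict, inputs: dict) -> dict:
    """Run steps in declared order; each step reads one earlier value by name."""
    state = dict(inputs)
    for step in journey["steps"]:
        state[step["id"]] = ACTIONS[step["action"]](state[step["input"]])
    return state  # doubles as a step-by-step trace for debugging


trace = run_journey(JOURNEY, {"work_order_id": "WO-1"})
print(trace["route"])
```

Because the same inputs always produce the same trace, each journey can be covered by ordinary unit tests.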

Chat

A conversational agent grounded in the wiki + graph + SDK, with a 100-question ground-truth eval that gates every release. No hallucinations to production.

Continuous change propagation: source systems update, audit re-runs, graph rebuilds, wiki pages regenerate, eval re-runs. The source truth stays in sync.

The source truth is yours, not ours.

Every artifact (the database, graph, wiki, SDK, journeys, and eval) ships into your repos, your cloud, your control. We design for portability from day one. When the engagement ends, your team picks it up and keeps building.

- Open, inspectable artifacts (Markdown, JSON, SQL, Python, TypeScript)

- No vendor lock-in, no proprietary blob, no "call us to update it"

- Eval harness so your team can measure correctness over time

brain/
├── db/                 # SQLite + provenance
├── graph/              # graph.json + ontology
├── wiki/               # 326 cross-referenced pages
├── sdk/                # Python + TypeScript
│   └── mcp_server/     # JSON-RPC 2.0
├── journeys/           # YAML + markdown twins
├── eval/               # 100-question ground truth
└── README.md           # your team's runbook

Ramco Aviation Brain

We built this for Ramco, a publicly listed, ~$275M market-cap enterprise software company that powers cloud-based ERP, global payroll, aviation MRO, logistics, and HCM for airlines, operators, and fleets worldwide. We took their decades-old MRO data substrate end-to-end into a deployed, evaluatable source of truth.

Two production engagements

Service Bulletin LLM ingestion + Knowledge-Graph–assisted RAG over a 4,000+ table SQL estate.

Forward-deployed engineers

Embedded engineering team, on-site and remote, operating inside the customer's environment with their data, their constraints.

Data migration in flight

Active migration work moving legacy schemas into the new source-truth-aware substrate without breaking the analytics consumers downstream.

Audit + database

152 source CSVs unified into a ~605 MB SQLite database. Full schema audit + provenance, with every row traceable to its origin file.

Knowledge graph

graph.json with entities (aircraft, work orders, parts, employees), relationships, and operational rules, extracted from data + SMEs.
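A graph artifact of this kind can be traversed with a few lines of standard-library code. The sketch below assumes a simple `nodes` / `edges` layout and made-up identifiers; the actual structure of graph.json is not specified here.

```python
import json
from collections import deque

# Assumed layout for illustration only.
GRAPH_JSON = """
{
  "nodes": [
    {"id": "aircraft:VT-ABC", "type": "aircraft"},
    {"id": "wo:WO-1", "type": "work_order"},
    {"id": "part:P-100", "type": "part"}
  ],
  "edges": [
    {"source": "aircraft:VT-ABC", "target": "wo:WO-1", "relation": "has_work_order"},
    {"source": "wo:WO-1", "target": "part:P-100", "relation": "consumes_part"}
  ]
}
"""


def reachable(graph: dict, start: str, max_hops: int) -> list[str]:
    """Breadth-first traversal along outgoing edges, up to max_hops from start."""
    adjacency: dict[str, list[str]] = {}
    for edge in graph["edges"]:
        adjacency.setdefault(edge["source"], []).append(edge["target"])

    seen = {start}
    order = []
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbour in adjacency.get(node, []):
            if neighbour not in seen:
                seen.add(neighbour)
                order.append(neighbour)
                queue.append((neighbour, hops + 1))
    return order


graph = json.loads(GRAPH_JSON)
related = reachable(graph, "aircraft:VT-ABC", max_hops=2)
print(related)
```

A hop limit keeps traversal results small enough to hand to a chat agent as context.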

326 Karpathy-style pages

Markdown wiki across domain, ontology, schema, rules, references, and relationships, fully cross-referenced with [[wiki-links]] and traceable to source rows.

Python + TypeScript SDK + MCP server

Mirror SDK implementations + an MCP server (JSON-RPC 2.0, stdio) so any agent can call the Ramco source truth as a tool. FTS5 BM25 search built in.
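For context on the search layer, this is roughly what BM25-ranked full-text search looks like with SQLite's FTS5 extension. The `wiki_fts` table and its contents are invented for the example, and it assumes the local SQLite build includes FTS5.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical index over wiki pages; requires SQLite compiled with FTS5.
conn.execute("CREATE VIRTUAL TABLE wiki_fts USING fts5(title, body)")
conn.executemany(
    "INSERT INTO wiki_fts (title, body) VALUES (?, ?)",
    [
        ("Hydraulic pump", "Inspection rules for the hydraulic pump assembly."),
        ("Landing gear", "Overhaul intervals for landing gear components."),
    ],
)


def search(conn, query, limit=5):
    """Return (title, score) pairs; bm25() is lower for better matches."""
    return conn.execute(
        "SELECT title, bm25(wiki_fts) AS score FROM wiki_fts "
        "WHERE wiki_fts MATCH ? ORDER BY score LIMIT ?",
        (query, limit),
    ).fetchall()


hits = search(conn, "hydraulic")
print([title for title, _ in hits])
```

FTS5 ships inside SQLite itself, so search needs no separate service to deploy or hand over.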

Two end-to-end journeys

Discrepancy resolution + Shop work-order repair. Markdown specs with executable YAML twins, running against a mock Ramco runtime (FastAPI).

Gemini 2.5 Flash chat, eval-gated

Function-calling chat agent grounded in the source truth. 100-question ground-truth eval gates every release.

Try the deployed source truth

Walk through journeys, query the wiki, chat with the agent, and see the ground-truth eval, all running against a real aviation MRO dataset.

Open the demo

Ground-truth eval

100 hand-curated questions with verified answers. The chat agent passes 95 of them, and every release re-runs the full suite.
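A release gate of this kind can be sketched in a few lines. The exact-match scoring, the 0.95 threshold, and the sample questions below are assumptions for illustration; the text above states the pass count, not how answers are scored or where the gate is set.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    question: str
    expected: str


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivial differences do not fail a case."""
    return " ".join(text.lower().split())


def run_eval(cases, answer_fn) -> float:
    """Return the pass rate of answer_fn over the ground-truth cases."""
    passed = sum(
        normalise(answer_fn(case.question)) == normalise(case.expected)
        for case in cases
    )
    return passed / len(cases)


def release_allowed(pass_rate: float, threshold: float = 0.95) -> bool:
    """Gate a release on the pass rate; the threshold is an assumed value."""
    return pass_rate >= threshold


# Hypothetical cases and a canned "agent" standing in for the chat model.
cases = [
    EvalCase("Which aircraft is WO-1 for?", "VT-ABC"),
    EvalCase("What is the status of WO-1?", "open"),
]
canned = {
    "Which aircraft is WO-1 for?": "vt-abc",
    "What is the status of WO-1?": "closed",
}
rate = run_eval(cases, lambda question: canned[question])
print(rate, release_allowed(rate))
```

Running the same function in CI on every release is what turns the question set into a gate.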

See the eval results

Stack used

- SQLite + FTS5 (BM25)

- Next.js 16 + React 19 (Vercel)

- Gemini 2.5 Flash · function calling

- MCP server (JSON-RPC 2.0)

- Python + TS mirror SDKs

Enterprise AI engagements before Veritaserum

The Source Truth methodology didn't appear from nowhere. It emerged from years of shipping invasive, ground-truth enterprise AI work, across aviation MRO, SAP, and computer vision.

Automated Service Bulletin Integration

LLM system that parses unstructured OEM bulletin PDFs and maps inspection directives into a maintenance database with thousands of tables. Replaces manual data entry that was slow and error-prone.

Knowledge-Graph Assisted RAG

Two-stage architecture for conversational analytics over a 4,000+ table SQL estate. A knowledge graph synchronised in parallel with SQL improved retrieval precision, join correctness, latency, and cost.

RAG Automation of SAP Process Design Documentation

Working prototype that generates the full Hire-to-Retire PDD by retrieving from SAP best-practice libraries and prior project assets. Faster delivery, higher consistency, dramatically less manual drafting.

Clean Core Enablement for SAP S/4HANA

Audit of every custom object in a legacy SAP estate; LLM-assisted analysis to identify redundant customisations and route necessary extensions to on-stack vs side-by-side. Lean, upgrade-friendly core.

The Ramco Aviation Brain (the live case study above) is the V1 of the Source Truth methodology, packaged, productised, and built to be the standard playbook across these verticals.

What CEOs and CTOs say about working with us

Two of the engineering leaders who've shipped enterprise AI alongside our team.

The team demonstrated strong technical expertise and a structured, thoughtful approach. They evaluated multiple options, made data-driven decisions, and delivered a practical, end-to-end solution with clear attention to performance and usability. We value the partnership and would confidently recommend them for any AI/ML and computer vision initiatives.

Rajesh Pillai

Founder & CTO · Algorisys

Vizuara proved to be the ideal AI development partner for building our SCMT platform. Selecting the right LLM, intelligent LLM chaining for multi-layered code analysis, architecting a powerful RAG system. Deep understanding of enterprise requirements and innovative approach to chaining multiple AI models accelerated our go-to-market significantly. We highly recommend them for organisations seeking world-class AI engineering capabilities.

Kalpesh Chavda

Co-Founder & Director · Diligent Global

We are the founders of Vizuara AI Labs

Veritaserum didn't start cold. It started with four years of teaching AI to industrial enterprises, and a list of CTOs who already trust us with their hardest problems.

Founders teach AI to industry

Through Vizuara AI Labs and our industrial wing First Principle Labs, we train CEOs, CTOs, engineering managers, and software engineers across enterprises.

Trust compounds

Our students aren't just learners; they're decision makers. They watch our YouTube channel, take our courses, and send their teams to our bootcamps. We become the AI team they call.

Enterprises bring us their hardest problem

When a CTO needs to deploy AI on top of two decades of legacy data, they come to us. The Source Truth Company is what comes after the trust is earned.

Built for legacy industrial enterprises

We start with aviation MRO, where Ramco is our anchor, and expand into adjacent regulated, asset-heavy verticals where the source of truth is locked in legacy systems.

Aviation MRO

Maintenance, repair, and overhaul operations on legacy ERPs. Decades of work-order data, regulatory artefacts, and shop-floor rules. Ramco is our flagship.

Industrial Manufacturing

Mid-to-large discrete and process manufacturers running on customised SAP, Oracle EBS, JD Edwards, or in-house systems with decades of business logic embedded in stored procedures.

Defense & Aerospace

Programme-management, supply chain, and certification data living across siloed systems with hard regulatory boundaries. Source-truth pipelines slot under existing controls.

Energy & Utilities

Asset-heavy operators with SCADA logs, GIS, work management, and regulatory reporting that have to stay in sync. The source truth closes the gap.

Pharma Manufacturing

Batch records, deviations, and CAPA flows tied to SOPs and regulatory submissions. A traceable source of truth is not optional here: 21 CFR Part 11 requires trustworthy, auditable electronic records.

Legacy Enterprise IT

Banks, insurers, and government bodies sitting on mainframes, COBOL, and decades of undocumented schemas. Migration projects that have been stuck for years.

The pattern is the same in every vertical: data trapped in legacy systems, rules in heads, an LLM project that won't make it past PoC. We build the substrate.

Authored, taught, watched 50 million times

The founders teach what they build. Manning best-sellers and hundreds of hours of original technical curriculum, watched by the same engineers and CTOs whose enterprises hire us.

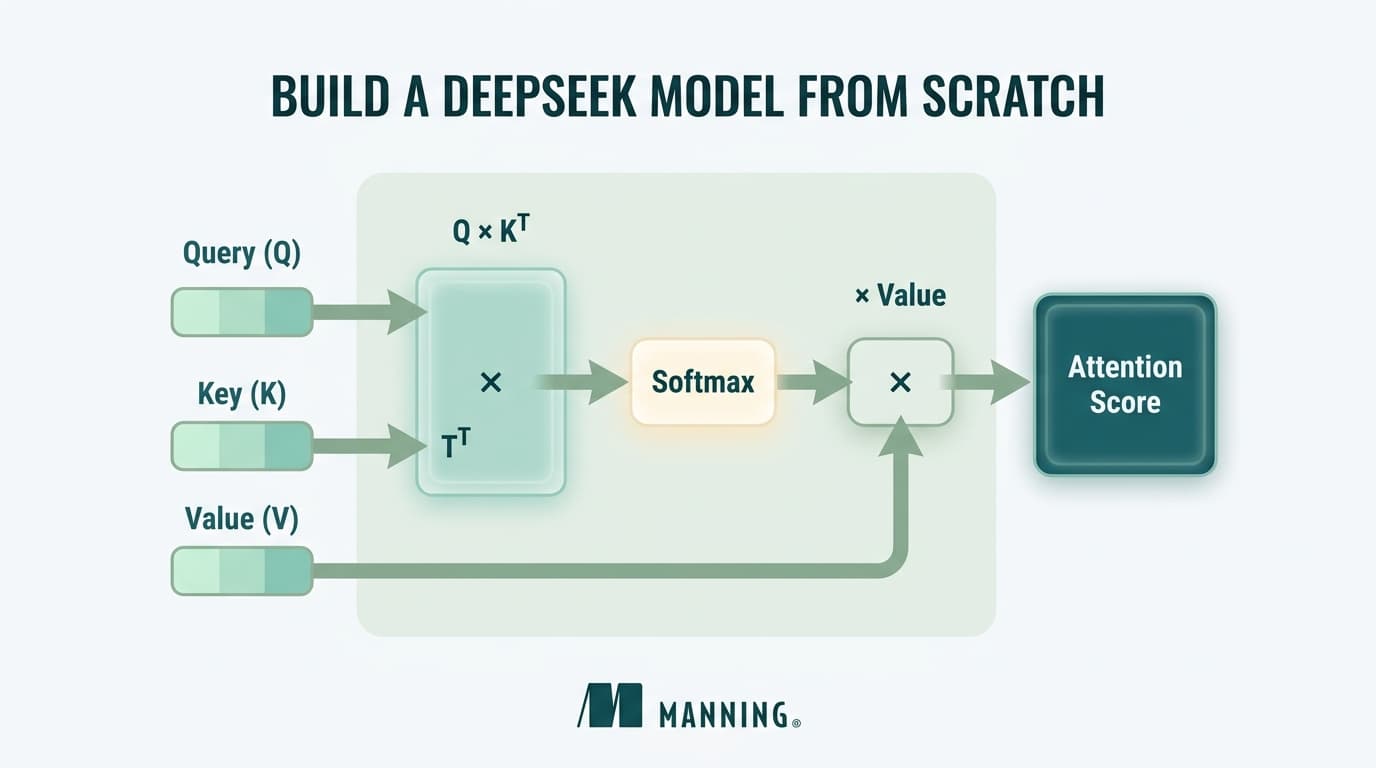

Build a DeepSeek Model (From Scratch)

Manning best-seller written by all three co-founders. End-to-end DeepSeek-style architecture, training, inference.

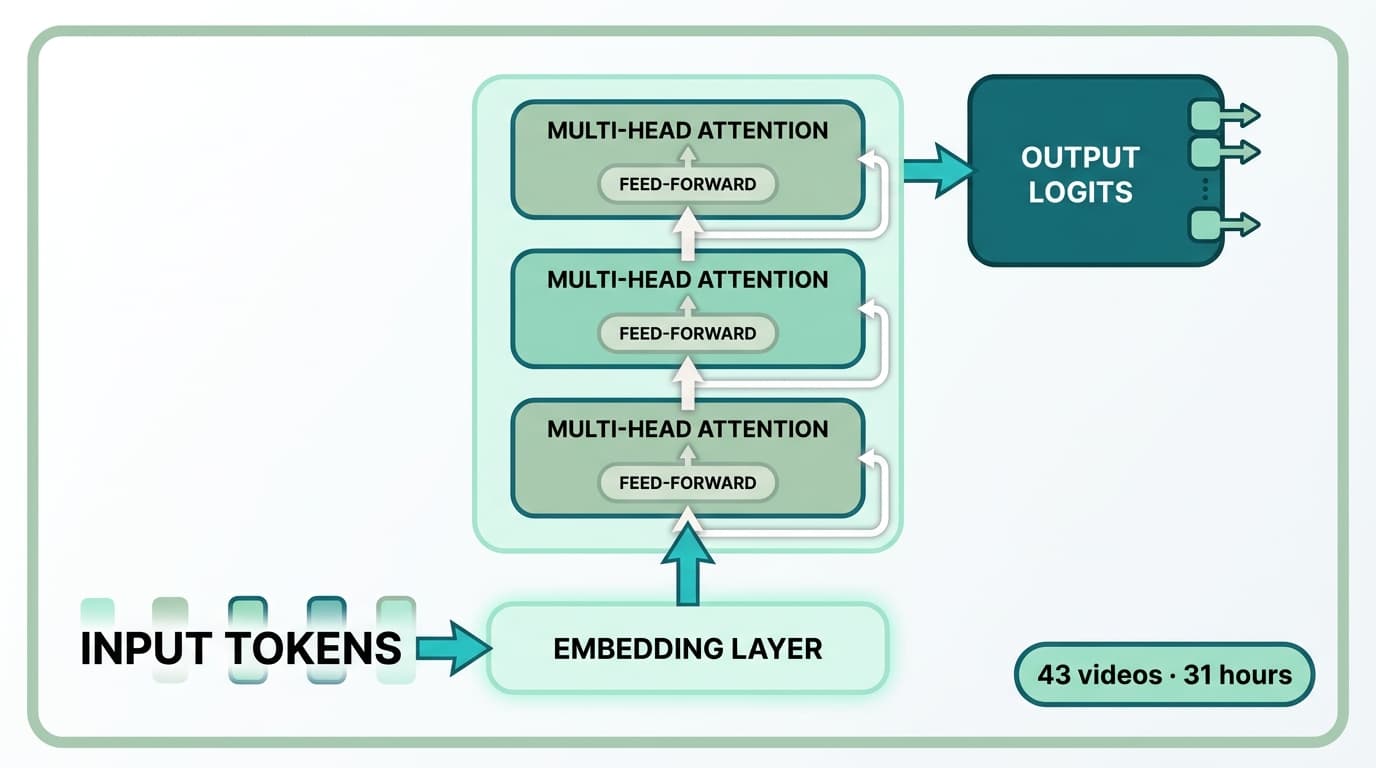

Building LLMs from scratch

Dr. Raj Dandekar's full curriculum: Transformers, tokenizer, attention, pre-training, fine-tuning. Built from the ground up.

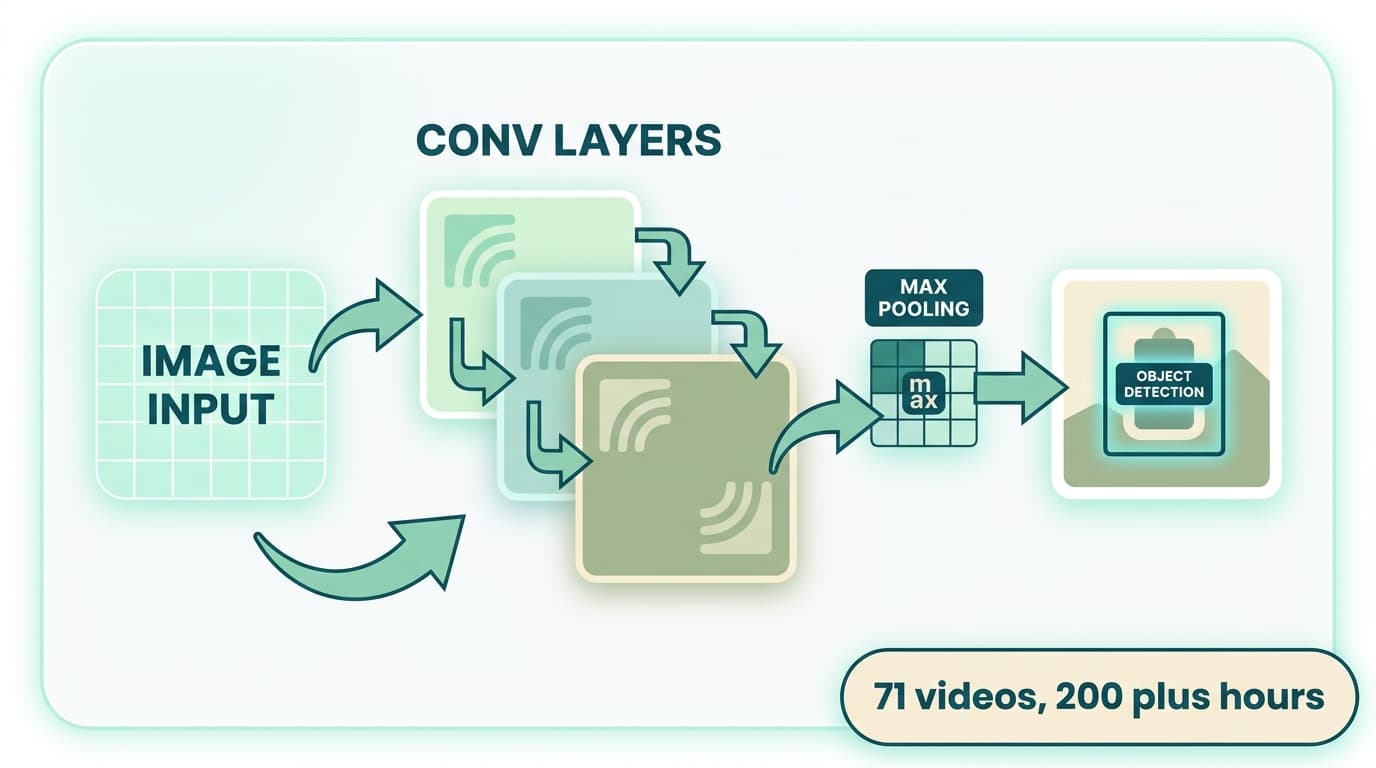

Computer Vision from Scratch

Dr. Sreedath Panat. CNNs, YOLO, segmentation, ViT, GANs. The standard reference, with deep math.

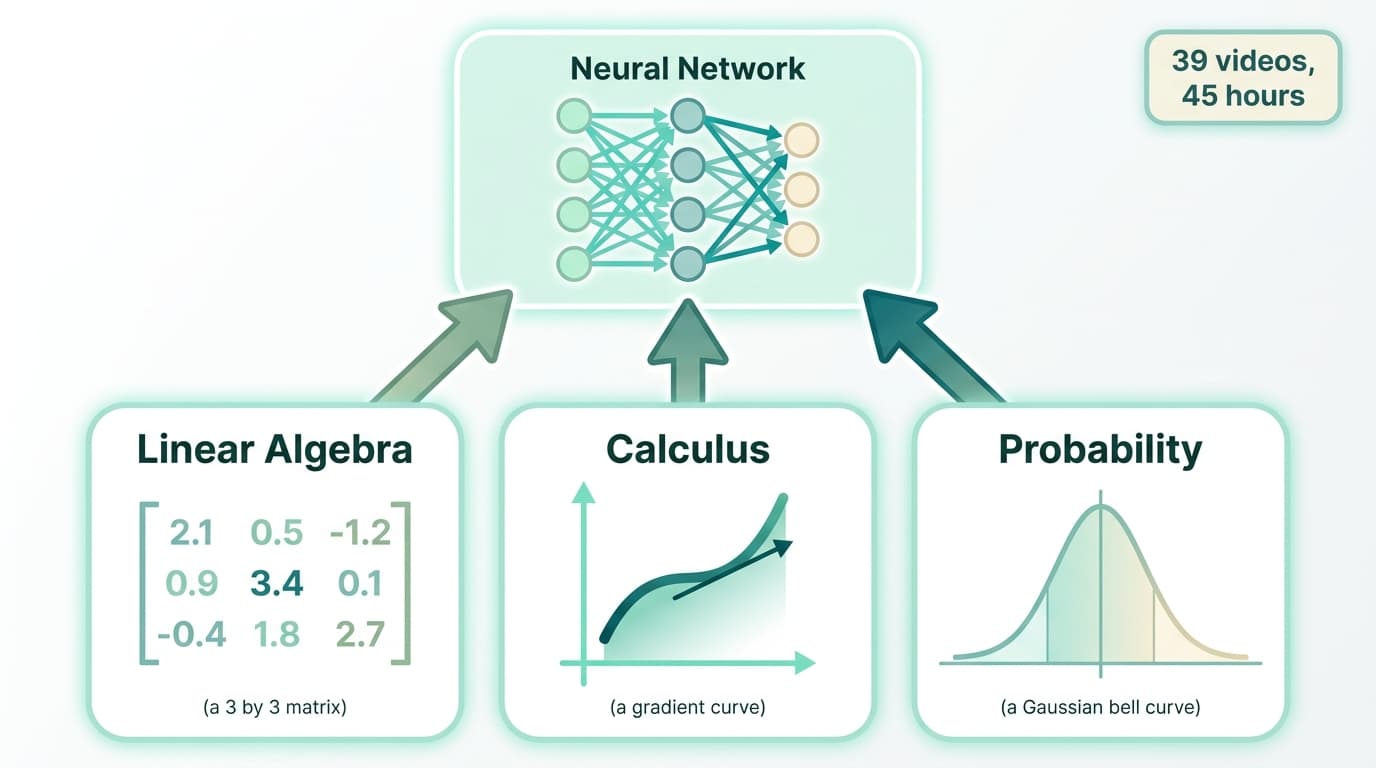

Foundations of AI and ML

Dr. Sreedath Panat. Linear algebra, calculus, probability, neural networks, backpropagation: the math foundation behind every model.

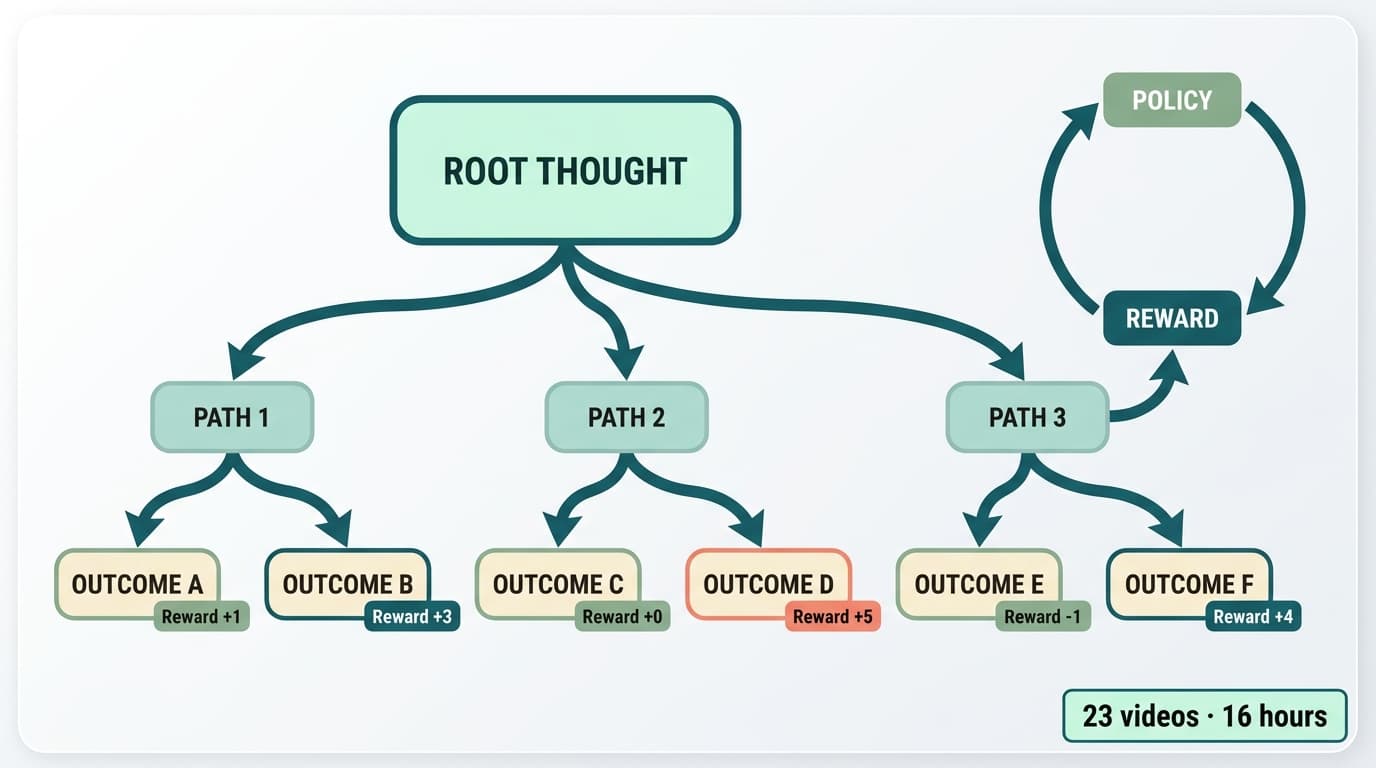

Reasoning LLMs from Scratch

Dr. Rajat Dandekar. Chain of Thought, RLHF, PPO, Tree of Thoughts, process reward models: the post-training stack.

Built by MIT, Purdue & IIT alumni

Three co-founders, full-time on Veritaserum. Built Vizuara AI Labs to $2M ARR teaching enterprise AI, now building the source of truth for those same enterprises.

All three co-founders are exclusively full-time on Veritaserum.

No consulting on the side, no advisor roles, no part-time anything. Veritaserum is where every working hour goes. Vizuara AI Labs continues to operate as the founders' education company, but the build team is dedicated here.

Dr. Sreedath Panat

Co-founder

PhD MIT, B.Tech IIT Madras. 10+ years in research and applied AI. Leads the methodology and delivery side of the Source Truth pipeline.

Dr. Raj Dandekar

Co-founder

PhD MIT, B.Tech IIT Madras. Builds LLMs from scratch, including DeepSeek-style architectures. Drives the chat, agent, and journey layers.

Manning #1 Best-Seller

Build a DeepSeek Model (From Scratch)

By Dr. Raj Dandekar, Dr. Rajat Dandekar, Dr. Sreedath Panat & Naman Dwivedi

A 4–8 week pilot, then continuous

Fixed-fee scoped pilot to deliver the V1 source truth. From there, a continuous engagement that expands coverage and ships agents on top, with your team progressively taking the wheel.

Audit & Scope

- Map every system, schema, and code path

- Identify the 2–3 priority workflows for V1

- Stand up the data substrate (DB + provenance)

- Sign off on ontology and rules with your SMEs

Build the source truth

- Knowledge graph + Karpathy-style wiki

- SDK + MCP server in your repos

- First two journeys + ground-truth eval

- Chat agent grounded against the wiki

Expand & Hand-off

- Onboard your team on the methodology

- Expand source-truth coverage workflow by workflow

- Continuous eval gating every release

- Optional: ship the production agent platform

We're not a per-seat SaaS. Pricing is fixed-fee for the pilot and retainer-based for the expansion phase. Talk to us and we'll walk you through what a Ramco-shaped engagement looks like for your data.

Let's build the source of truth for your enterprise.

Bring us the dataset that nobody on your team can fully map. We'll give you back the source truth: yours, evaluatable, and ready to ship agents on top.

Fixed-fee 4–8 week pilot

Forward-deployed engineers, on-site if needed

Customer owns every artifact